We thought we’d covered this before, and we had: it was one year ago yesterday that we did a post about Twitter experimenting with a new feature that would scan your tweet for potentially harmful language and put up a prompt asking if you really meant to send it in the heat of the moment.

A year later, that feature is apparently being rolled out, according to NBC News.

Twitter says it’s releasing a feature that automatically detects "mean" replies on its service and prompts people to review the replies before sending them. https://t.co/JbnKOXb94o

— NBC News (@NBCNews) May 5, 2021

The tech company said Wednesday it was releasing a feature that automatically detects “mean” replies on its service and prompts people to review the replies before sending them.

“Want to review this before Tweeting?” the prompt asks in a sample provided by the San Francisco-based company.

Twitter users will have three options in response: tweet as is, edit or delete.

…

In the tests, it found that if prompted, 34 percent of people revised their initial reply or did not reply at all. After being prompted once, people composed on average 11 percent fewer offensive replies in the future, according to the company.

The tests helped to train Twitter’s algorithms to better detect when a seemingly mean tweet is just sarcasm or friendly banter, the company said.

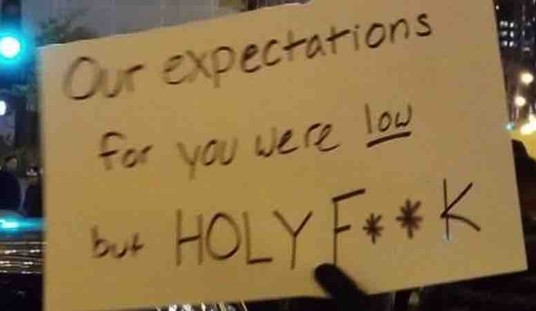

Can Twitter’s algorithm tell if this is sarcasm? We think this is a great idea and we’re glad Twitter is policing our speech before we “say” it.

LOLOL https://t.co/eLv6vrnfwN

— Dana Loesch (@DLoesch) May 6, 2021

The sissification of our society cannot be understated.

— Chad Hassler (@chadhassler) May 6, 2021

What grade is @twitter in?

— Todd D. Johnson (@ToddDJohnson1) May 6, 2021

Great! A way to ensure that my "mean" replies are "mean" enough!

— Shameless One (Supreme Being or Smartass…pick 1) (@ShamelessOne2) May 6, 2021

is "GFY" mean ?

— For All Intents and Porpoises (@daveweiss68) May 6, 2021

No more mean tweets lol.

— Larry M Lawrence (@lmlawrence891) May 6, 2021

https://twitter.com/Kilroy02568325/status/1390260816646217728

https://twitter.com/TiredDoc1/status/1390136097322246146

I already was mean "detected" responding to Meghan McCain🤣

— DavidDornsLifeMattered (@FortifiedElect1) May 6, 2021

https://twitter.com/matthewbostian/status/1390280553409175553

I’d love to know the definition of “mean.” It’s a damn subjective term.

— Scott MacDonald (@pickledopinion) May 6, 2021

I expect a dramatic rise in sarcastic replies.

— Hugh Odom (@Hughlysses) May 6, 2021

I may not ever be able to tweet again

— Stevo1962 (@stevo1962) May 6, 2021

I'm Southern, I know the delicate art of being mean in a nice way. Lol

— Sarah Le Chat 🇺🇸 (@TNVoteRed777) May 6, 2021

Well, bless your heart.

Is this replacing shadowbanning or in addition to it (he said from behind a shadowban)

— 𝐄𝐫𝐢𝐤 𝐑𝐮𝐬𝐬𝐞𝐥𝐥 (@rikoruss31) May 6, 2021

Twitter servers after launch of feature: pic.twitter.com/A8Jka4OXwT

— Walter Watson (@crazywalt77) May 6, 2021

Gonna be a really busy feature.

— Easier to vote, harder to cheat. (@robert_bambrick) May 6, 2021

Keith Olbermann most affected?@ChrisLoesch

— Original Pechanga #StopDisenrollment (@opechanga) May 6, 2021

It's going to make it harder for Jimmy Kimmel to find Mean Tweets to show now!

— Metal Lord (@fyredogg89) May 6, 2021

Nextdoor has that. It's every bit as stupid as it sounds.

— S̀͡҉̴͏ḩ̶a̷̛͘͟͏k͡҉͏y҉̵̵̵̕ (@extrashaky) May 6, 2021

Instagram already has this. It makes people afraid to post.

— Merlin’s Magic (@MerlinsFoot) May 6, 2021

Pussies. Excuse my French. But all liberals are. They cry and cry over everything possible. Their insecurities are frightening. Thanks to all the helicopter parents and participation trophies.

— Marty Kehoe (@marty_kehoe) May 6, 2021

You can’t be mean? What’s the point of Twitter ?

— rudy valley (@RudyValley) May 6, 2021

Twitter is such a dumb company it sometimes physically hurts watching this clown show. Would this be detected as mean? I can't wait to find out. Bring it you dummies.

— Chris BTC 🇸🇻 "Fix the money, fix the world" (@got_integrity) May 6, 2021

Let me guess, the algorithms already predict political preference and flag accordingly. “Mean” only applies to one side and/or those with differing opinions. Brilliant!

— Eran Quinlan (@EranQuinlan) May 6, 2021

What if I identify as mean?

— Ashley H. (@Renegaderunner2) May 6, 2021

I'm gonna have to click so many times when I reply to liberals…..

— WTF is wrong with people? (@O341Marine) May 6, 2021

So far, there’s nothing stopping you from posting your “mean” tweet; Twitter’s just stepping in and giving you a chance to think about it first.

Related:

Twitter experimenting with a prompt to keep you from saying things you don’t mean when things get heated https://t.co/EZHQGtqEZN

— Twitchy Team (@TwitchyTeam) May 5, 2020
